Copify, Nublue and quality copywriting

Earlier this month, web developers Nublue published this article, which purports to test various copywriting ‘resources’. The survey pits a freelance copywriter, Craig Wright, against four content mills: Copify, Text Broker, Content Brokers and Odesk.

Craig, who trades as Straygoat Copywriting, was named in the first version of the article. At his request, it was edited to remove his name, but he ‘outed’ himself anyway by referring to the post on Twitter.

Conflict of interest

Several comments on the blog note the existing relationship between Nublue and content mill Copify, which comes top in the test.

The facts are as follows.

- Copify was co-founded by a former Nublue employee

- Copify operates from the same building as Nublue, and staff from the two firms know each other personally (confirmed in comments on the post)

- Copify is a supplier to Nublue

- Copify was nominated by Nublue for a Mashable award.

I’ll leave it to you to decide whether this constitutes a conflict of interest for Nublue, and whether the content delivered by Copify for the test was likely to be representative of its usual standard. (Craig was made aware that his copy was being used for a test, so it’s reasonable to assume that Copify also knew.)

The challenge

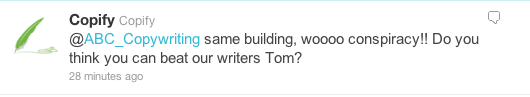

Shortly after the Nublue article appeared, I noted the points above on Twitter and received this reply from Copify:

As a rebuttal of the bias charge, it seemed rather thin; as a challenge, it seemed irrelevant. The real question is not whether I’m better than Copify’s writers, but whether Craig Wright is – despite what the Nublue test says. And that’s the focus of this article.

Scope of the test

Nublue asked for a 300-word blog post reviewing the beta version of Internet Explorer 9.

It rated the content providers in five areas, awarding scores out of ten in each category to arrive at a total score out of 50:

- cost

- ease of placing order

- speed of service

- usability of website

- quality of the finished article.

Copify and Text Broker came first and second with 49/50 and 46/50 respectively, with Craig in third place on 31/50. The other two content mills scored 9/50 and 4/50 because of problems with their service that don’t concern us here.

Category selection

Nublue’s assessment method, while perhaps even-handed prima facie, is actually highly questionable – because the choice of categories is arbitrary, and the weightings given to them are unbalanced.

For a content mill, ‘usability of website’ is key; for a freelance copywriter, it’s marginally relevant at best. A freelancer’s site is a portfolio with an email address attached; it has no real functionality.

Similar observations could be made on ‘ease of placing order’ and ‘speed of service’. A freelancer is unlikely to offer instant online ordering, because they’d want to discuss the brief before submitting a price. Similarly, an in-demand freelancer might not be able to turn a commission round in 24 hours, but their clients are willing to wait in order to get the writer they want.

Including these three categories inevitably biases the results towards content mills; in effect, the categories imply a preference for a Copify-style content service before we even get to the scoring. A fairer test would also reflect the strengths that freelance copywriters offer: listening, developing the brief, making helpful suggestions, responding to feedback, developing an understanding of the client’s business and so on.

Category weightings

Even if we give Nublue’s choice of categories the benefit of the doubt, there’s still a major problem with the weightings they’ve been given. Each category is evenly weighted (ten points), but four of the five categories focus on process rather than product, making the weighting given to quality far too low.

For example, are ‘usability of website’ and ‘ease of placing order’ really just as important as ‘quality of the finished article’? And is the way copy is ordered and delivered really three times as important as the actual quality of that copy, as the combined weighting of ‘usability of website’, ‘ease of placing order’ and ‘speed of service’ (30 points) implies?

In this model, a content provider could potentially deliver completely unusable content but still score 40/50 because they did it quickly, cheaply and efficiently. This seems like a case of ‘the operation was successful, but the patient died’.

Alternative assessment method

We could use a scoring method that gives equal weight to cost, quality and service. While this is also arbitrary, it does reflect the methods used elsewhere, for example at FreeIndex (customers score providers out of five for value for money, service and quality).

However, I would argue that, in copywriting specifically, quality is far more important than service and price. I’ll develop my argument more fully in a future post, but for now I will simply observe that the commercial and economic value delivered by (for example) a blog post depends on its power to generate social-media interest, attract backlinks and build authorial reputation – all of which are directly related to its quality.

Therefore, I propose a higher weighting of 30 points for quality, with cost and service together accounting for a further 20 points, to give this model:

- Cost: Score out of 10, as now.

- Service: Score out of 10, with ease of placing order, speed of service and usability of website each contributing about 3.3 points; calculated by adding Nublue’s three scores together and dividing by 3.

- Quality: Score out of 30 (calculated by multiplying Nublue’s score by 3)

As before, this gives a total score out of 50.
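To make the arithmetic concrete, here is a minimal sketch of both scoring models in Python. The function and parameter names are my own shorthand rather than anything Nublue published, and the sample scores describe the purely hypothetical 'fast, cheap but unusable' provider mentioned earlier, not a real test result.

```python
def nublue_total(cost, ordering, speed, usability, quality):
    """Nublue's model: five categories, ten points each (total out of 50)."""
    return cost + ordering + speed + usability + quality


def reweighted_total(cost, ordering, speed, usability, quality):
    """Proposed model: cost /10, service /10, quality /30 (total out of 50)."""
    # Service is the mean of the three process scores, so it stays out of 10.
    service = (ordering + speed + usability) / 3
    # Quality is tripled, so it counts for 30 of the 50 points.
    return cost + service + quality * 3


# Hypothetical provider: perfect on cost and process, zero on quality.
scores = dict(cost=10, ordering=10, speed=10, usability=10, quality=0)

print(nublue_total(**scores))      # 40
print(reweighted_total(**scores))  # 20.0
```

Under equal weighting the unusable article still earns 40/50; under the proposed weighting it earns 20/50, which seems closer to what it would be worth to a client.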

The table below shows how the three medal-winners would score under this new system, using Nublue’s own scores.

| | Copify | Text Broker | Craig Wright |
|---|---|---|---|
| Cost | 9/10 | 8/10 | 4/10 |
| Service | 9.3/10 | 8/10 | 6/10 |
| Quality | 27/30 | 21/30 | 27/30 |
| Total | 45.3/50 | 37/50 | 37/50 |

Assessment of quality

When I caught up with Craig, I found him understandably ‘angry’ at the results of the test. While he’s realistic about the way his costs and service stack up against the content mills, he takes issue with the evaluation of the end product.

‘I don’t care about being seen as expensive or slower,’ he said. ‘But what I don’t agree with is that the end result is of the same quality. As I stated in my comments on the blog, there is no way that Copify article can be seen as an objective review. It may as well have been written by Microsoft’s PR department.’

The Nublue brief is very clear: it asks for a review of IE9, not just coverage. The text of the brief includes these words (my italics):

This article will be aimed at Internet professionals and webmasters that may already be using IE9 or thinking about trying it.

The article should review Internet Explorer 9 with a focus on its performance and functionality for web users and developers. The article should make comparisons with other browsers that are available such as Firefox that are currently seen to have stronger developer ecosystems and stands [sic] compliant features.

While the Copify piece uncritically lists the features of IE9, press-release style, Craig’s expertly probes the areas where IE9 needs to prove itself to the web-developer community. Is it really fair to give both articles the same score?

‘It would have been good if some developers looked at the articles and commented, because I know which one they would have disliked the most – the pro-IE9 [Copify] one!’ laughs Craig. ‘Considering that developers were part of the target market, you’d expect something in the Copify article to provide them with a bit of info about how it is going to affect them. Would an IT expert/web developer have considered the Copify article was well researched and thorough? I doubt it!’

A commenter on the Nublue blog echoes these sentiments:

I did find it interesting that the Copify article and the freelance writer were ranked equally. I’m not even sure that the Copify article responded to the prompt. No evaluation of performance and functionality for web developers, no substantive comparison to other browsers, especially in the context of compatibility and developer ecosystems and no real consensus on whether IE9 is a competitor (“a step in the right direction”). Moreover, it’s technically inaccurate where there is substantive analysis.

To get an impartial developer’s perspective, I asked Gareth Thompson of Codepotato for his views on the three articles, as well as his score out of 30 for each. The texts were sent in a Word document with no accompanying details, and I didn’t outline the scope and intention of this post. As far as possible, it was a ‘blind’ assessment – a copywriting ‘Pepsi challenge’.

Gareth rated the Copify article 15/30, Text Broker 19.5/30 and Craig’s piece 25.5/30. ‘I think that article B [Craig] is a better article from the technology or “capabilities” point of view, as it explains the improvements that most web developers will want to know about,’ he said. ‘Article C [Text Broker] just seems to miss the mark a little. Personally, out of the three articles I would have been more inclined to bookmark/recommend article B [Craig].’

When we plug Gareth’s scores into the more balanced scoring system, here’s what we find:

| | Copify | Text Broker | Craig Wright |
|---|---|---|---|
| Cost | 9/10 | 8/10 | 4/10 |
| Service | 9.3/10 | 8/10 | 6/10 |
| Quality | 15/30 | 19.5/30 | 25.5/30 |
| Total | 33.3/50 | 35.5/50 | 35.5/50 |
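For anyone who wants to check the sums, the totals can be reproduced with a few lines of Python. This is only a sketch: the figures are copied from the table above, and the variable names are mine.

```python
# Scores from the table: (cost /10, service /10, quality /30).
scores = {
    "Copify": (9, 9.3, 15),
    "Text Broker": (8, 8, 19.5),
    "Craig Wright": (4, 6, 25.5),
}

# Total out of 50, rounded to one decimal place to avoid float noise.
totals = {name: round(sum(parts), 1) for name, parts in scores.items()}

# Provider with the highest quality score (third item in each tuple).
best_on_quality = max(scores, key=lambda name: scores[name][2])

for name, total in totals.items():
    print(f"{name}: {total}/50")
print("Top on quality:", best_on_quality)
```

Text Broker and Craig tie on the total, so the ranking between them depends on which category is treated as the decider.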

So there you have it – a much more balanced outcome. But I’m giving the final honours to Craig, for three reasons. Firstly, because Nublue marked him down on speed of service just because he was on holiday when they approached him. Secondly, because his article has the all-important social-media appeal that would have delivered true long-term value to the client – as Gareth confirmed in a blind test. And finally because he came top, convincingly, on quality – which should surely be the ultimate deciding factor.

Conclusion

I’ll leave the last word to Craig himself. ‘For an in-depth and considered view that gives your readership the answers they are looking for and adds value to your site, you’re better off with a freelance copywriter,’ he says. ‘One who takes the time to look past the press releases and investigate the real issues, concerns etc. – a process that takes more than an hour, and so costs more than £15!’

In a future post, I’ll expand on this point, explaining exactly why quality of content is so important – and why you ignore it at your peril.

Comments (12)

I wondered how long it would take you to create a riposte to this Copify nonsense. I particularly liked their tweeted reply, as highlighted in this post, conspiracy theories et al.

It’s certainly not a coincidence, whatever way you look at it. The two parties have a clear link and thus there’s an evident conflict of interest. Although admittedly I would have been duped if you hadn’t flagged up the news story from way back when during the original Twitter exchange.

It was also a pretty poor blog post on their part in the first instance, if that was an advert for Copify I’d certainly walk the other way.

Copify are chancers at the best of times, this is just further evidence of that. I look forward to seeing how they respond to the charges; will they lay down the gauntlet to you again? Will you accept if they do Tom?

Tom – very impressed that you managed to wade through the methods and data behind this dubious challenge without resorting to slinging mud.

Fair, incisive and rather entertaining – Great work!

Hi Tom,

I’d like to address some of the points you raise in this post if I may. Not because I want to argue with you, I think we’re past that, but because I want your readers to be in possession of all of the facts.

Firstly, your ‘conflict of interest’ argument doesn’t really tell the whole story. Yes Rob used to work for NuBlue and yes Copify and NuBlue are based in the same building, but what you don’t mention is that Rob’s company MJR Web are in direct competition with NuBlue as a web development agency. Believe me when I tell you, there is absolutely no incentive for them to issue a biased review.

First of all, your assertion that Textbroker’s copy is of a higher standard than Copify is simply not true. The review clearly demonstrates that the US offering is littered with spelling mistakes and grammatical errors.

In terms of ‘ease of use’, you have neglected to mention that the transaction with the freelancer required no fewer than 12 emails before completion, something which NuBlue referred to as “time intensive.”

Why were so many emails needed? I would have thought that the client at least deserved the courtesy of a phone call, seeing as they were paying such a premium for his services. In terms of speed, the freelancer took around 10 days to deliver, Copify 5 days. Again, this failed to justify the premium he charged.

The freelancer’s argument that he was billing based on ‘research’ is contradicted by the fact that he asked the client to provide him with sources of information:

“I was surprised when he asked me to recommend sites for information on IE9, Firefox etc. As a technical writer I would have hoped he did not require my help in researching the topic.” This is particularly galling.

Perhaps this particular freelancer has done your profession a disservice, but I think most rationally minded people would agree that the amount he charged (£150) does not justify the end product. Particularly when you consider that the Copify content came in at 1/10th of this.

Thanks for taking the time to perform your own test. It is interesting to see the comparative results and nice to see that it has been handled professionally. I would like to reiterate that we do know Copify, but this test was conducted professionally and fairly, and we told Craig that it was a test out of respect for his business; the other content mills were not informed of the test. I chose to nominate Copify for a Mashable award because they asked us to; we are not trying to hide that we do have a relationship with them.

It would probably be fair to also say that although we have used Copify ourselves in the past, we do prefer to write content in-house, and have also used professional writers. So this is not a “you should use Copify for all copy” campaign, as we don’t.

I think the freelancer’s site, turnaround time etc. do matter; we are a company and working all the time, so waiting is losing money.

Thanks again for your response, it’s an interesting debate.

Elly – NuBlue

I’m not sure, whatever the manipulated outcome, that testing someone’s ability to write a review about a new bit of technology is, strictly speaking, a pure copywriting challenge anyway, is it? How many of Copify’s 2p-a-word writers out there are qualified technical authors/equipped to take on the challenge of writing such pieces, and if they are, why would they be doing it for 2p (or maybe 4p) a word? (As well as technology articles, Copify have jobs for cat food, men’s clothes, women’s dresses and all sorts on there.) As more of a creative writer I would struggle to write an authoritative/informed technology review. In fact I wouldn’t even take one on. The ability to review technology is surely an art in itself which presumably requires some kind of technical nous, no? A better test would be to put the 2p-a-word writer up against a sensible-rates freelancer on a subject that doesn’t require a certain level of technical expertise but demands true creativity rather than an ability to report the facts by reviewing a bit of technology.

As the copywriter/technical author who wrote the piece in question, I would like to point out that my fees are not based on per word, they are based on the time taken to research and write a piece. I think the client doesn’t really appreciate the complexities and issues surrounding IE9, and just sees the end result as a 300 word article vs a 300 word article. They don’t seem to understand the technical issues with IE9 and so can’t see what the Copify article fails to do. Because of my technical background, I could approach the article from the developers’ perspective, something I just don’t think the client appreciates.

Now, if I were writing a 300 word article about cat food, then yes, the chances are that my fee would have been about £50. But we are talking a technical subject that requires more reading and expert knowledge. I don’t know the ins and outs of IE9, but I know how to find out what developers need to know about it, and that is the key point for a meaningful review.

Finally, the client seems to suggest that they did all the research for me, which is nonsense. I spent hours contacting web developers, posting on forums etc. to make sure the content I produced was accurate and of value to the target audience. The links provided by the client were just a starting point, nothing more.

I’d like to thank Tom for writing this piece. And just for the record, prior to this Copify project, I had no knowledge or experience of ABC Copywriting at all 🙂

@Martin

I didn’t ‘assert’ anything regarding the quality of the copy. I asked an impartial third party to evaluate the samples in a blind test and published the results. Was the Nublue test blind?

Regarding errors in the Text Broker piece, you can’t have it both ways. In the comments on this post on your site http://blog.copify.com/post/the-long-awaited-case-study/ you cheerfully acknowledged that errors in your own copy wouldn’t affect its publication. In this case, the errors didn’t bother my reviewer – who is a member of your target audience.

@Rob McVey (comments unapproved)

Your comments were not approved because they were ad hominem attacks on Craig. If you would like to write something civil and constructive relating to the quality debate, I’ll gladly publish it.

Excellent article, Tom. You should get a job on Watchdog.

I think Copify’s childish, tweeted reply (“woooo conspiracy”) tells you all you need to know about this company.

It’s also pretty obvious that there was a clear – and pretty major – conflict of interest in the client chosen to test the articles. But that’s nothing new for Copify. The ‘long-awaited case study’ on their site was also for the web development firm that built their website.

@Tom – So are you now saying that it is OK for copy to have grammatical errors and spelling mistakes?

@Integral – How is there a conflict of interest in our case study? Rob used Copify to create a press release which was deemed to be fit for publication on an authoritative news site. Job done.